We're having an issue with our SpecFlow 3.1 tests, which run in parallel across multiple NCrunch nodes with many threads available.

The execution is held up by 1 or 2 processes that each run multiple tests, from multiple features / folders, with different tags.

This is increasing our test time from 20 mins to 60+ mins.

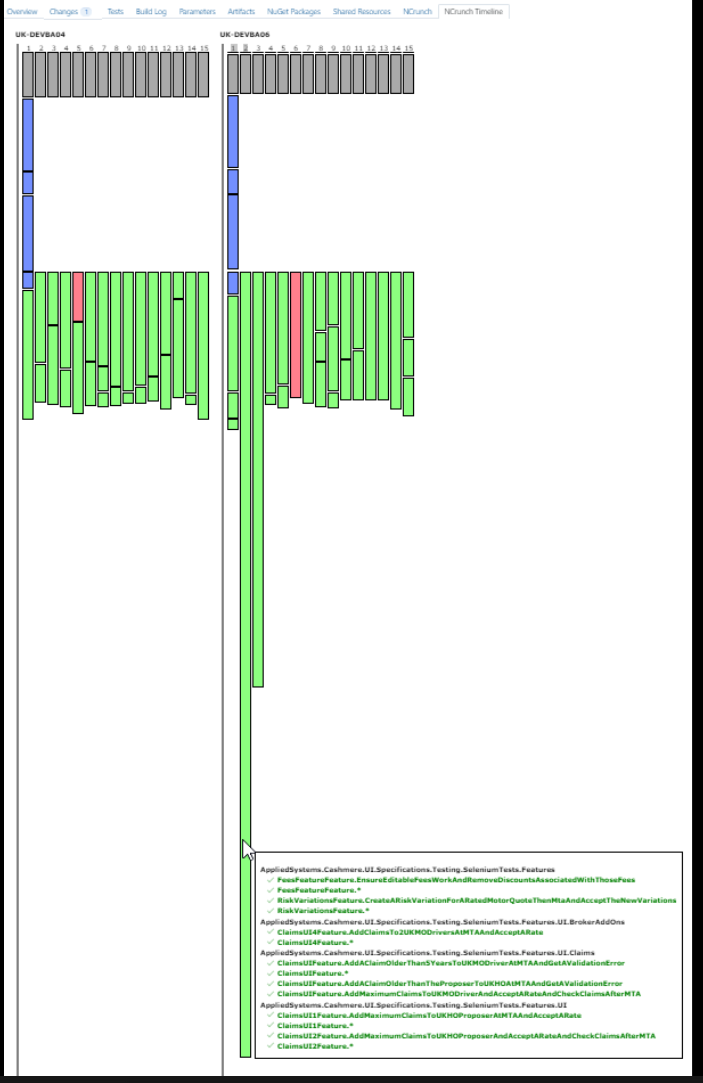

I've scaled down the timeline by a factor of 40 to fit it into a screenshot.

There's 1 process taking over 50 mins to run 8 tests from 4 features with different tags.

The longest test it runs takes under 14 mins.

The other processes finish after 10 mins and then sit idle.

I noticed that the 2 really long processes each show in the timeline as a single block covering multiple tests in multiple features.

The other processes are divided into multiple blocks in the timeline, with only 1 test per block.

Is there anything that would cause NCrunch to group those tests into a single block that can't be spread out across multiple processes?